Contents

- 2. Motivation Intelligent Environments are aimed at improving the inhabitants’ experience and task performance Automate functions in

- 3. Automation and Robotics in Intelligent Environments Control of the physical environment Automated blinds Thermostats and heating

- 4. Robots Robota (Czech) = A worker of forced labor From Czech playwright Karel Čapek's 1921 play

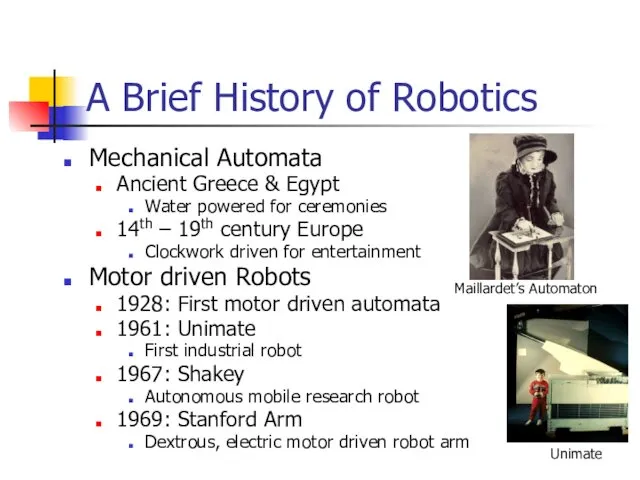

- 5. A Brief History of Robotics Mechanical Automata Ancient Greece & Egypt Water powered for ceremonies 14th

- 6. Robots Robot Manipulators Mobile Robots

- 7. Robots Walking Robots Humanoid Robots

- 8. Autonomous Robots The control of autonomous robots involves a number of subtasks Understanding and modeling of

- 9. Traditional Industrial Robots Traditional industrial robot control uses robot arms and largely pre-computed motions Programming using

- 10. Problems Traditional programming techniques for industrial robots lack key capabilities necessary in intelligent environments Only limited

- 11. Requirements for Robots in Intelligent Environments Autonomy Robots have to be capable of achieving task objectives

- 12. Robots for Intelligent Environments Service Robots Security guard Delivery Cleaning Mowing Assistance Robots Mobility Services for

- 13. Autonomous Robot Control To control robots to perform tasks autonomously a number of tasks have to

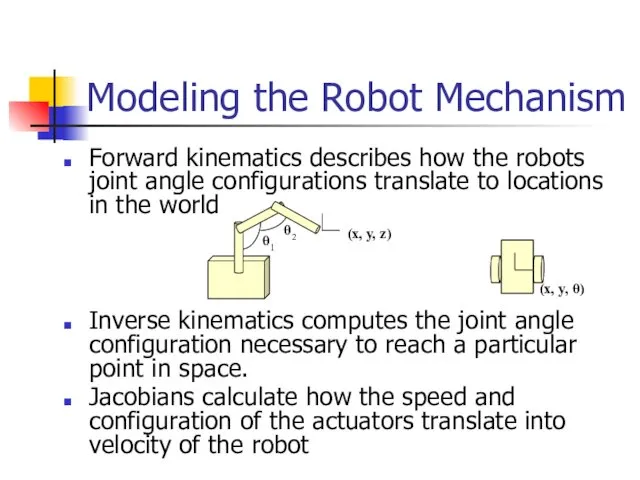

- 14. Forward kinematics describes how the robot's joint angle configurations translate to locations in the world Inverse

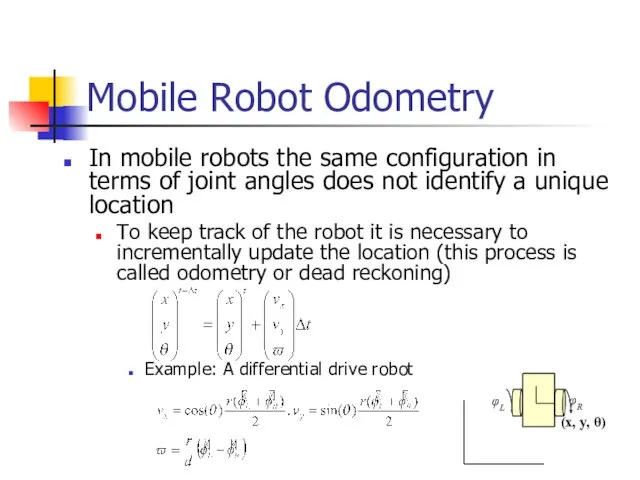
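Forward and inverse kinematics can be illustrated on a hypothetical planar arm with two revolute joints and link lengths `l1` and `l2` (an assumed example, not a robot from the slides). Forward kinematics has a closed form. The inverse returns one of the generally two joint solutions, or `None` when the target lies outside the reachable workspace.

```python
import math


def forward_kinematics(theta1, theta2, l1=1.0, l2=1.0):
    """End-effector (x, y) of a planar 2-link arm; joint angles in radians."""
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


def inverse_kinematics(x, y, l1=1.0, l2=1.0):
    """One joint solution (theta1, theta2) reaching (x, y), or None if unreachable."""
    # Law of cosines gives the elbow angle from the distance to the target.
    c2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if c2 < -1.0 or c2 > 1.0:
        return None  # target outside the annular workspace
    theta2 = math.acos(c2)  # picks the solution with theta2 in [0, pi]
    theta1 = math.atan2(y, x) - math.atan2(l2 * math.sin(theta2),
                                           l1 + l2 * math.cos(theta2))
    return theta1, theta2
```

Feeding the inverse solution back through `forward_kinematics` recovers the requested point, e.g. `forward_kinematics(*inverse_kinematics(1.2, 0.7))` is approximately `(1.2, 0.7)`.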

- 15. In mobile robots the same configuration in terms of joint angles does not identify a unique

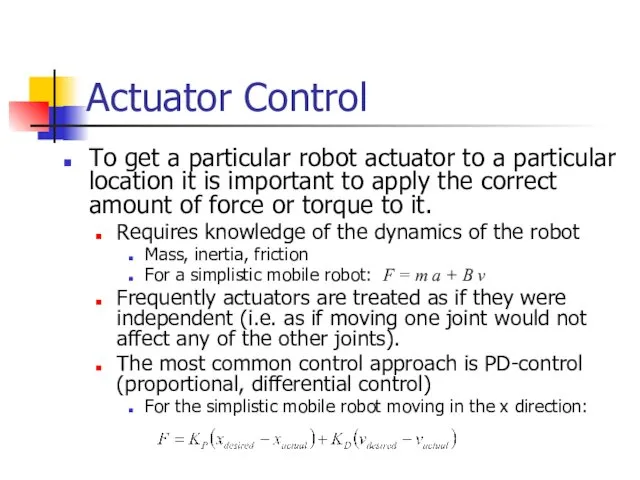
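One way to see why wheel angles alone do not determine where a mobile robot is: for a differential-drive robot the pose is obtained by integrating wheel motions over time (dead reckoning), so it depends on the order of the motions. This sketch assumes the distance each wheel travelled per step is known and uses a hypothetical wheel base; each step is integrated with a midpoint-heading approximation.

```python
import math


def odometry_update(pose, d_left, d_right, wheel_base):
    """Dead-reckoning pose update for a differential-drive robot.

    pose: (x, y, theta); d_left, d_right: distance each wheel rolled this step.
    """
    x, y, theta = pose
    d_center = (d_left + d_right) / 2.0          # forward travel of the axle midpoint
    d_theta = (d_right - d_left) / wheel_base    # change of heading
    # Move along the average heading over the step (midpoint approximation).
    x += d_center * math.cos(theta + d_theta / 2.0)
    y += d_center * math.sin(theta + d_theta / 2.0)
    theta = (theta + d_theta + math.pi) % (2.0 * math.pi) - math.pi  # wrap to [-pi, pi)
    return x, y, theta
```

Driving 1 m straight and then turning 90° in place leaves the robot at about (1, 0); turning first and then driving leaves it at about (0, 1). Both sequences roll each wheel through exactly the same total distance.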

- 16. Actuator Control To get a particular robot actuator to a particular location it is important to
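The slide text is cut off here. Feedback control is one standard way to bring an actuator to a target position. The sketch below assumes a PID controller (not necessarily the method on the original slide) driving a hypothetical, idealized joint whose angular velocity simply equals the control command; the gains are illustrative values chosen for this toy plant.

```python
class PID:
    """Textbook PID controller (positional form); gains are illustrative."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None  # no derivative term on the very first step

    def update(self, setpoint, measurement, dt):
        error = setpoint - measurement
        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate_joint(target=1.0, steps=1000, dt=0.01):
    """Drive a hypothetical ideal joint whose angular velocity equals the command."""
    pid = PID(kp=3.0, ki=2.0, kd=0.1)
    angle = 0.0
    for _ in range(steps):
        command = pid.update(target, angle, dt)
        angle += command * dt  # Euler integration of the toy joint dynamics
    return angle
```

After 10 simulated seconds the joint settles within a small fraction of a radian of the target. On a real actuator the gains would have to be tuned to its actual dynamics, limits, and sensor noise.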

- 17. Robot Navigation Path planning addresses the task of computing a trajectory for the robot such that
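The requirements in this slide are truncated, but a common way to compute a collision-free path is graph search over an occupancy grid. The sketch below uses A* with a Manhattan-distance heuristic on a 4-connected grid (one common choice, assumed here rather than taken from the slides); `0` marks free cells and `1` obstacles.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on an occupancy grid (0 = free, 1 = obstacle).

    Returns a list of (row, col) cells from start to goal, or None if unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):  # Manhattan distance: admissible for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    came_from = {start: None}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:  # walk parent links back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry; a cheaper route was found later
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                ng = g + 1
                if ng < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = ng
                    came_from[(nr, nc)] = cell
                    heapq.heappush(open_heap, (ng + h((nr, nc)), ng, (nr, nc)))
    return None
```

On a grid where a wall blocks the direct route, the returned path detours around the gap; if the goal is fully enclosed the function returns `None`.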

- 18. Sensor-Driven Robot Control To accurately achieve a task in an intelligent environment, a robot has to

- 19. Robot Sensors Internal sensors to measure the robot configuration Encoders measure the rotation angle of a
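As a small worked example of how encoder readings become usable quantities, assume a hypothetical incremental encoder with `ticks_per_rev` counts per revolution mounted on a wheel of radius `wheel_radius`, and no wheel slip.

```python
import math


def ticks_to_angle(ticks, ticks_per_rev):
    """Rotation angle in radians corresponding to an incremental encoder count."""
    return 2.0 * math.pi * ticks / ticks_per_rev


def ticks_to_distance(ticks, ticks_per_rev, wheel_radius):
    """Distance rolled by the wheel, assuming no slip: arc length r * angle."""
    return wheel_radius * ticks_to_angle(ticks, ticks_per_rev)
```

For instance, 512 ticks on a 1024-count encoder is half a revolution (π rad); on a 5 cm wheel one full revolution rolls about 0.314 m.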

- 20. Robot Sensors Proximity sensors are used to measure the distance or location of objects in the
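For a time-of-flight proximity sensor such as sonar, the range follows from the echo delay, and a range plus bearing gives the object's location relative to the robot. The speed of sound and the robot-frame convention (x forward, y to the left) below are assumptions for illustration.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 °C (assumed)


def sonar_range(echo_time_s, speed=SPEED_OF_SOUND):
    """Distance to the reflector: the pulse travels there and back, hence / 2."""
    return speed * echo_time_s / 2.0


def to_robot_frame(distance, bearing):
    """Convert a (range, bearing) reading into (x, y) in the robot frame."""
    return distance * math.cos(bearing), distance * math.sin(bearing)
```

An echo arriving after 10 ms corresponds to an object about 1.7 m away.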

- 21. Computer Vision provides robots with the capability to passively observe the environment Stereo vision systems provide
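Stereo systems recover depth by triangulation. For a rectified camera pair with focal length f (pixels), baseline B (metres), and disparity d (pixels) between the two images of a point, the depth is Z = fB/d. The camera parameters in the example are hypothetical.

```python
def stereo_depth(focal_px, baseline_m, disparity_px):
    """Depth (metres) of a point seen by a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means the point is at infinity)")
    return focal_px * baseline_m / disparity_px
```

With f = 500 px and B = 0.1 m, a 25 px disparity puts the point 2 m away. Because depth is inversely proportional to disparity, a one-pixel matching error matters much more for distant objects than for near ones.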

- 22. Uncertainty in Robot Systems Robot systems in intelligent environments have to deal with sensor noise and

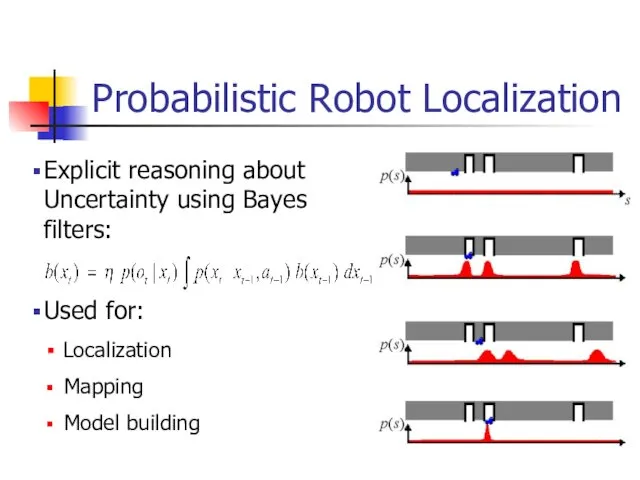
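A basic tool for coping with sensor noise is to combine several noisy measurements. Assuming two independent estimates of the same quantity with Gaussian noise, the fused estimate weights each by the inverse of its variance (this is the scalar form of the Kalman-filter measurement update).

```python
def fuse(mean1, var1, mean2, var2):
    """Combine two independent Gaussian estimates of the same quantity.

    Each estimate is weighted by the inverse of its variance; the fused
    variance is never larger than either input variance.
    """
    var = var1 * var2 / (var1 + var2)
    mean = (var2 * mean1 + var1 * mean2) / (var1 + var2)
    return mean, var
```

Two equally reliable readings of 1.0 and 3.0 (variance 1 each) fuse to 2.0 with variance 0.5; a very noisy second reading barely shifts a precise first one.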

- 23. Probabilistic Robot Localization Explicit reasoning about Uncertainty using Bayes filters: Used for: Localization Mapping Model building
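The Bayes-filter idea can be shown with a discrete (histogram) filter for localization in a hypothetical cyclic 1-D corridor of doors and walls. Motion blurs the belief (prediction step) and each sensor reading reweights it by how well every cell explains the reading (measurement update). The noise probabilities are assumed values, and this is a sketch of the general technique rather than the specific filter behind the slide.

```python
def normalize(belief):
    total = sum(belief)
    return [b / total for b in belief]


def predict(belief, move, p_exact=0.8, p_under=0.1, p_over=0.1):
    """Motion update on a cyclic 1-D grid: the robot tries to move `move` cells."""
    n = len(belief)
    new = [0.0] * n
    for i, b in enumerate(belief):
        new[(i + move) % n] += p_exact * b       # moved as commanded
        new[(i + move - 1) % n] += p_under * b   # fell one cell short
        new[(i + move + 1) % n] += p_over * b    # overshot by one cell
    return new


def update(belief, world, measurement, p_hit=0.6, p_miss=0.2):
    """Measurement update: weight each cell by the likelihood of the reading."""
    weighted = [b * (p_hit if world[i] == measurement else p_miss)
                for i, b in enumerate(belief)]
    return normalize(weighted)
```

Starting from a uniform belief in the world `["door", "door", "wall", "wall", "wall"]`, sensing a door, moving one cell, and sensing a door again concentrates the belief on cell 1, the only position consistent with both readings.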

- 24. Deliberative Robot Control Architectures In a deliberative control architecture the robot first plans a solution for

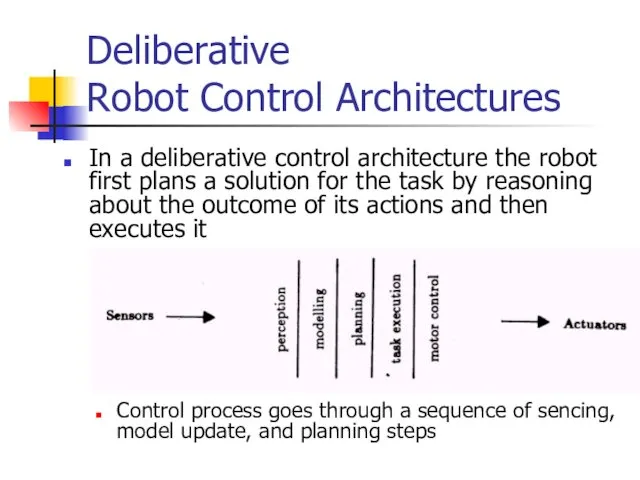

- 25. Deliberative Control Architectures Advantages Reasons about contingencies Computes solutions to the given task Goal-directed strategies Problems

- 26. Behavior-Based Robot Control Architectures In a behavior-based control architecture the robot’s actions are determined by a

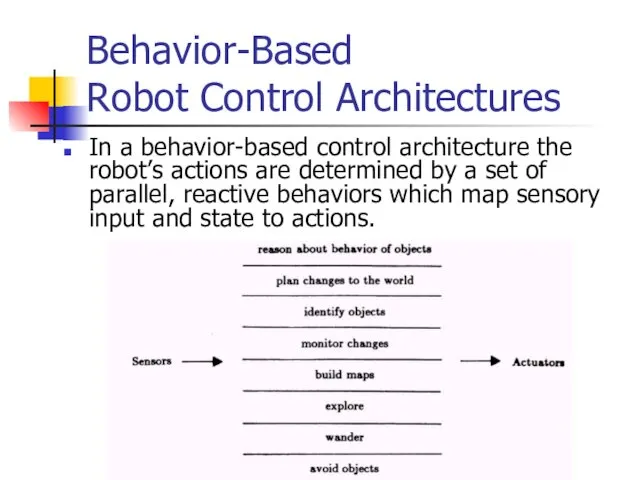

- 27. Behavior-Based Robot Control Architectures Reactive, behavior-based control combines relatively simple behaviors, each of which achieves a

- 28. Complex behavior can be achieved using very simple control mechanisms Braitenberg vehicles: differential drive mobile robots

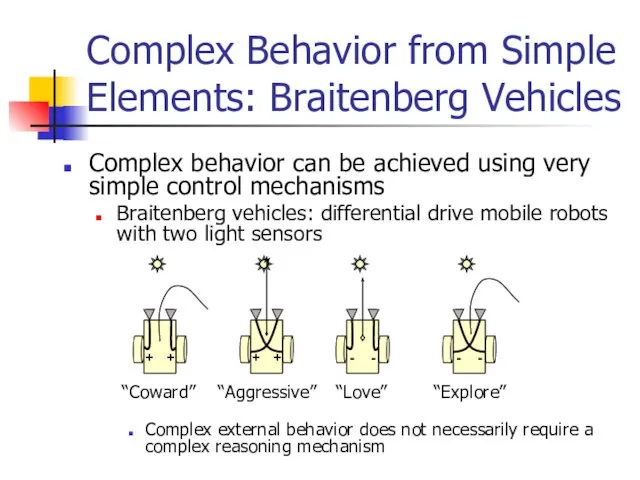
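The wiring of two classic Braitenberg vehicles can be written down in a few lines: each light sensor drives one wheel directly, and only the wiring differs. The sensor model (intensity falling off with squared distance) and the sensor positions are assumptions for illustration.

```python
def light_intensity(sensor_xy, light_xy):
    """Hypothetical sensor model: intensity falls off with squared distance."""
    dx = light_xy[0] - sensor_xy[0]
    dy = light_xy[1] - sensor_xy[1]
    return 1.0 / (1.0 + dx * dx + dy * dy)


def wheel_speeds(left_sensor, right_sensor, crossed):
    """Map two sensor readings directly to (left_wheel, right_wheel) speeds."""
    if crossed:
        # Crossed excitatory wiring (vehicle 2b, "aggression"): turns toward the light.
        return right_sensor, left_sensor
    # Same-side excitatory wiring (vehicle 2a, "fear"): turns away from the light.
    return left_sensor, right_sensor


def yaw_rate(left_speed, right_speed, wheel_base=0.4):
    """Differential-drive turn rate; positive means counter-clockwise (left)."""
    return (right_speed - left_speed) / wheel_base
```

With a light ahead and to the left, the crossed vehicle turns left toward it and the uncrossed one turns right away from it, although neither contains any explicit notion of "light" or "goal".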

- 29. Behavior-Based Architectures: Subsumption Example Subsumption architecture is one of the earliest behavior-based architectures Behaviors are arranged

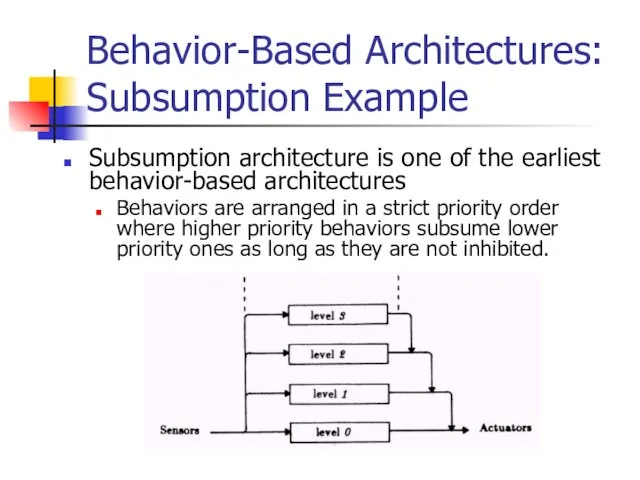
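The description of how behaviors are arranged is cut off in the source. The sketch below is a simplified arbitration in the spirit of subsumption, assuming a fixed priority order in which an active higher-priority behavior suppresses all lower ones. Brooks' original architecture wires suppression and inhibition between individual modules, which this omits. The behaviors `avoid_obstacle`, `seek_light`, `wander` and the sensor keys are hypothetical.

```python
from typing import Callable, Optional, Sequence, Tuple

Command = Tuple[float, float]                  # (forward speed, turn rate)
Behavior = Callable[[dict], Optional[Command]]  # None means "not active"


def avoid_obstacle(sensors: dict) -> Optional[Command]:
    if sensors["front_distance"] < 0.3:
        return (0.0, 1.0)  # stop and turn in place
    return None


def seek_light(sensors: dict) -> Optional[Command]:
    if sensors["light_visible"]:
        return (0.5, sensors["light_bearing"])  # steer toward the light
    return None


def wander(sensors: dict) -> Optional[Command]:
    return (0.3, 0.0)  # always active: default forward motion


def arbitrate(behaviors: Sequence[Behavior], sensors: dict) -> Command:
    """Return the command of the highest-priority active behavior."""
    for behavior in behaviors:  # ordered from highest to lowest priority
        command = behavior(sensors)
        if command is not None:
            return command  # suppresses every lower-priority behavior
    return (0.0, 0.0)
```

Calling `arbitrate([avoid_obstacle, seek_light, wander], sensors)` on every control cycle yields obstacle avoidance when something is close, light seeking when a light is visible, and wandering otherwise.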

- 30. Subsumption Example A variety of tasks can be robustly performed from a small number of behavioral

- 31. Reactive, Behavior-Based Control Architectures Advantages Reacts fast to changes Does not rely on accurate models “The

- 32. Hybrid Control Architectures Hybrid architectures combine reactive control with abstract task planning Abstract task planning layer

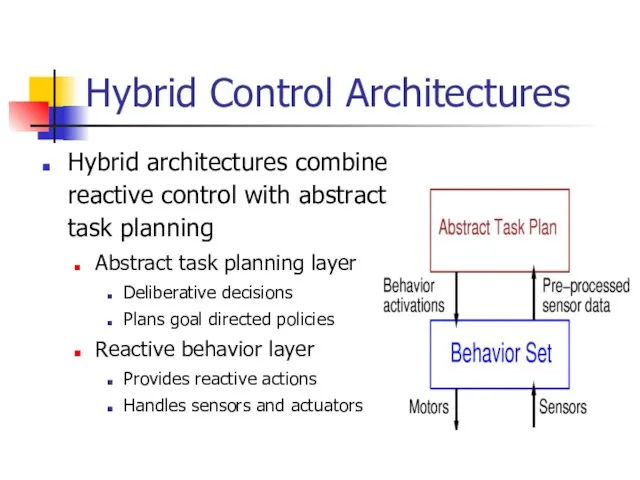

- 33. Hybrid Control Policies Task Plan: Behavioral Strategy:

- 34. Example Task: Changing a Light Bulb

- 35. Hybrid Control Architectures Advantages Permits goal-based strategies Ensures fast reactions to unexpected changes Reduces complexity of

- 36. Traditional Human-Robot Interface: Teleoperation Remote Teleoperation: Direct operation of the robot by the user User uses

- 37. Human-Robot Interaction in Intelligent Environments Personal service robot Controlled and used by untrained users Intuitive, easy

- 38. Example: Minerva the Tour Guide Robot (CMU/Bonn) © CMU Robotics Institute http://www.cs.cmu.edu/~thrun/movies/minerva.mpg

- 39. Intuitive Robot Interfaces: Command Input Graphical programming interfaces Users construct policies from elemental blocks Problems: Requires

- 40. Intuitive Robot Interfaces: Robot-Human Interaction The robot has to be able to communicate its intentions to

- 41. Example: The Nursebot Project © CMU Robotics Institute http://www.cs.cmu.edu/~thrun/movies/pearl_assist.mpg

- 42. Human-Robot Interfaces Existing technologies Simple voice recognition and speech synthesis Gesture recognition systems On-screen, text-based interaction

- 43. Integration of Commands and Autonomous Operation Adjustable Autonomy The robot can operate at varying levels of

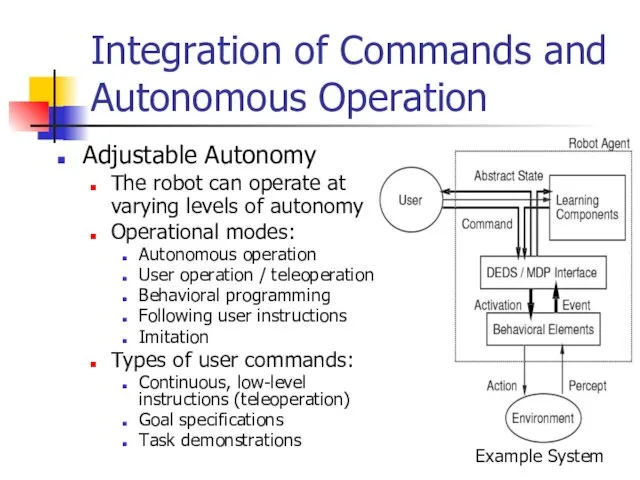
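The levels of autonomy on this slide are truncated. One simple way to realize a continuously adjustable level, assumed here for illustration rather than taken from the slides, is linear shared control: the executed command is a weighted blend of the user's command and the autonomous controller's command.

```python
def blend_commands(user_cmd, auto_cmd, autonomy):
    """Linear shared control: autonomy=0 -> pure user command, 1 -> fully autonomous."""
    if not 0.0 <= autonomy <= 1.0:
        raise ValueError("autonomy must lie in [0, 1]")
    return tuple(autonomy * a + (1.0 - autonomy) * u
                 for u, a in zip(user_cmd, auto_cmd))
```

With `autonomy=0.5`, a user command `(1.0, 0.0)` and an autonomous command `(0.0, 1.0)` blend to `(0.5, 0.5)`. Practical systems often vary the weight with the situation, for example handing more control to the robot near obstacles.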

- 44. "Social" Robot Interactions To make robots acceptable to average users they should appear and behave “natural”

- 45. "Social" Robot Example: Kismet © MIT AI Lab http://www.ai.mit.edu/projects/cog/Video/kismet/kismet_face_30fps.mpg

- 46. "Social" Robot Interactions Advantages: Robots that look human and that show “emotions” can make interactions more

- 47. Human-Robot Interfaces for Intelligent Environments Robot Interfaces have to be easy to use Robots have to

- 48. Intelligent Environments are non-stationary and change frequently, requiring robots to adapt Adaptation to changes in the

- 49. Adaptation and Learning In Autonomous Robots Learning to interpret sensor information Recognizing objects in the environment

- 50. Learning Approaches for Robot Systems Supervised learning by teaching Robots can learn from direct feedback from

- 51. Learning Sensory Patterns Chair Learning to Identify Objects How can a particular object be recognized?

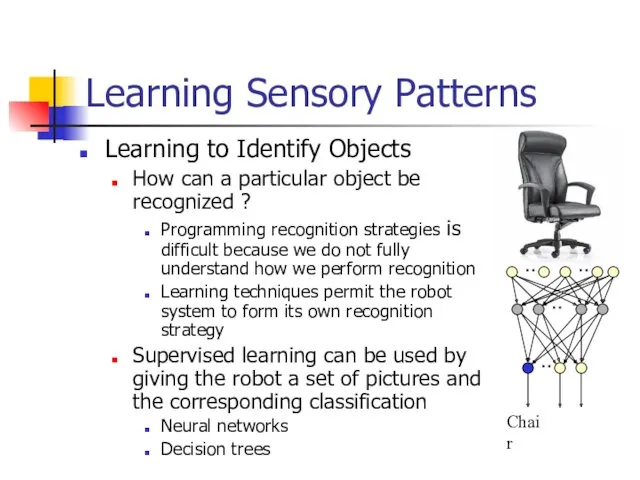
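A minimal example of learning to recognize objects from labelled examples is a k-nearest-neighbour classifier over feature vectors. The features here (object height and top-surface area, in metres and square metres) and the training data are hypothetical. A real system would extract features from sensor data, and k-NN is only one of many possible supervised learners.

```python
import math
from collections import Counter


def knn_classify(train, query, k=3):
    """train: list of (feature_vector, label). Returns the majority label of the k nearest."""
    nearest = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical labelled examples: (height_m, top_area_m2) -> label
TRAIN = [
    ((0.90, 0.20), "chair"), ((0.85, 0.25), "chair"), ((1.00, 0.22), "chair"),
    ((0.75, 1.20), "table"), ((0.72, 0.90), "table"), ((0.70, 1.50), "table"),
]
```

A new object measuring `(0.88, 0.30)` is classified as a chair, and one measuring `(0.73, 1.10)` as a table.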

- 52. Learning Task Strategies by Experimentation Autonomous robots have to be able to learn new tasks even

- 53. Example: Reinforcement Learning in a Hybrid Architecture Policy Acquisition Layer Learning tasks without supervision Abstract Plan

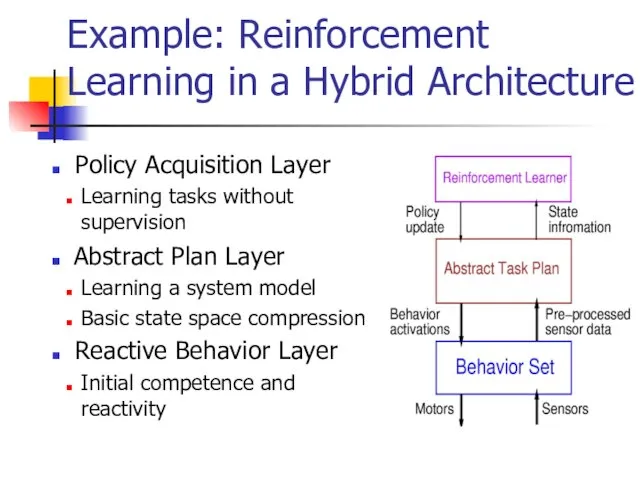
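Learning by experimentation without a teacher is often formalized as reinforcement learning. The sketch below is plain tabular Q-learning on a hypothetical five-state corridor with a reward only at the right end. It illustrates the learning rule itself, not the policy-acquisition layer or hybrid architecture shown on the slide; all parameters are assumed values.

```python
import random

N_STATES = 5          # states 0..4 on a line; state 4 is the goal (terminal)
ACTIONS = (-1, +1)    # move left, move right


def step(state, action):
    """Deterministic corridor: walls at both ends, reward 1 on reaching the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _episode in range(episodes):
        state = 0
        for _t in range(100):  # cap the episode length
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)  # explore, or break ties randomly
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, ACTIONS[a])
            # Q-learning target: reward plus discounted best value of the next state.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
            if done:
                break
    return q


def greedy_policy(q):
    """Best action (as -1/+1) in each non-terminal state."""
    return [ACTIONS[max(range(2), key=lambda a: q[s][a])] for s in range(N_STATES - 1)]
```

After training, the greedy policy moves right in every state, even though the agent was never told which direction leads to the reward.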

- 54. Example Task: Learning to Walk

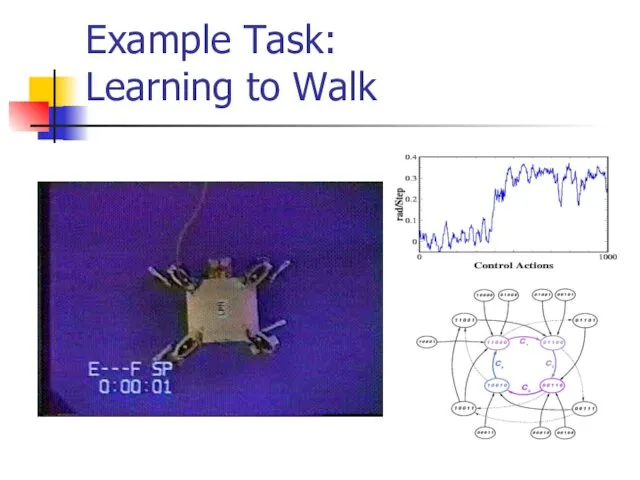

- 55. Scaling Up: Learning Complex Tasks from Simpler Tasks Complex tasks are hard to learn since they

- 56. Example: Learning to Walk

- 58. Скачать презентацию

Подходы к измерению информации

Подходы к измерению информации Social Media

Social Media Запись числа в различных системах счисления. ОГЭ - 3 (N10)

Запись числа в различных системах счисления. ОГЭ - 3 (N10) Технології програмування КС. Лекція 2 (частина 2)

Технології програмування КС. Лекція 2 (частина 2) Модульное программирование

Модульное программирование Процессорные инструкции

Процессорные инструкции 11 класс. Базы данных

11 класс. Базы данных Модели и моделирование

Модели и моделирование Операционные системы. Управление памятью

Операционные системы. Управление памятью Учимся создавать презентации

Учимся создавать презентации Киберпреступность и кибертерроризм

Киберпреступность и кибертерроризм Тип данных. Запись. (Лекция 9)

Тип данных. Запись. (Лекция 9) Анализ затрат на информационное обеспечение деятельности компании

Анализ затрат на информационное обеспечение деятельности компании Создание Web-страниц с помощью html-кода

Создание Web-страниц с помощью html-кода Рядки в Java

Рядки в Java Технологии системного анализа

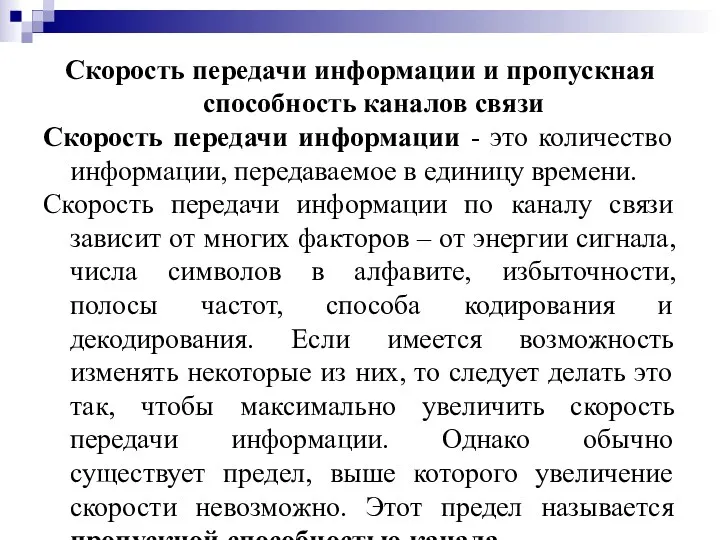

Технологии системного анализа Скорость передачи информации и пропускная способность каналов связи

Скорость передачи информации и пропускная способность каналов связи SCADA-системы

SCADA-системы Функціональна структура типової інформаційної системи для потреб оцінювання

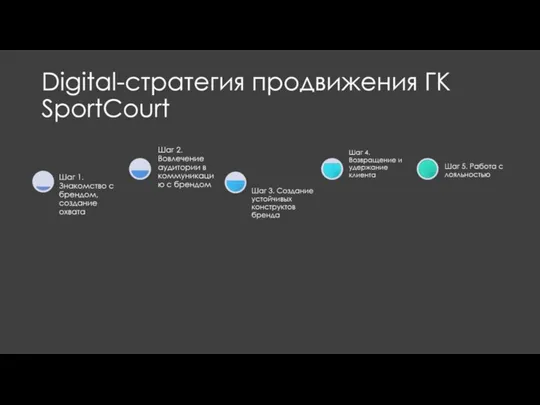

Функціональна структура типової інформаційної системи для потреб оцінювання Digital-стратегия продвижения ГК SportCourt

Digital-стратегия продвижения ГК SportCourt Сетевое сообщество учителей

Сетевое сообщество учителей Пакет офисных приложений MS Office

Пакет офисных приложений MS Office Основы социальной информатики. Информационная безопасность

Основы социальной информатики. Информационная безопасность Работа с Joomla

Работа с Joomla Презентация к уроку по учету трат_1

Презентация к уроку по учету трат_1 Adaptive libraries for multicore architectures with explicitly-managed memory hierarchies

Adaptive libraries for multicore architectures with explicitly-managed memory hierarchies Объекты ядра Windows

Объекты ядра Windows Сетевая этика. Культура общения в сети

Сетевая этика. Культура общения в сети