Slide 2

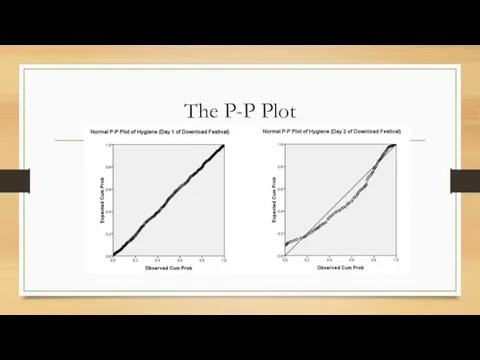

Slide 3

Assumptions

Parametric tests based on the normal distribution assume:

Additivity and linearity

Normality something or other

Homogeneity of Variance

Independence

Slide 4

Additivity and Linearity

The outcome variable is, in reality, linearly related to any predictors.

If you have several predictors then their combined effect is best described by adding their effects together.

If this assumption is not met then your model is invalid.
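As a sketch of what additivity and linearity mean together, a model with two predictors can be written as below. The notation is assumed here for illustration and is not taken from the slide: $Y_i$ is the outcome for case $i$, $X_{1i}$ and $X_{2i}$ are the predictors, $b_0$, $b_1$, $b_2$ are the parameters, and $\varepsilon_i$ is the error.

$$
Y_i = b_0 + b_1 X_{1i} + b_2 X_{2i} + \varepsilon_i
$$

Each predictor enters as a straight-line term (linearity), and the terms are summed rather than, say, multiplied (additivity).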

Slide 5

Normality Something or Other

The normal distribution is relevant to:

Parameters

Confidence intervals around a parameter

Null hypothesis significance testing

This assumption tends to get incorrectly translated as ‘your data need to be normally distributed’.

Slide 6

When does the Assumption of Normality Matter?

In small samples: the central limit theorem allows us to forget about this assumption in larger samples.

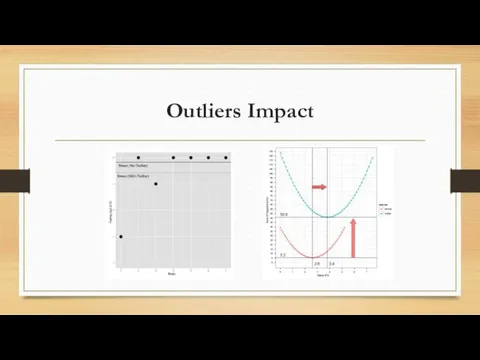

In practical terms, as long as your sample is fairly large, outliers are a much more pressing concern than normality.
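The central limit theorem claim above can be checked with a small simulation. The sketch below is illustrative only: the exponential population, the sample sizes (5 and 100), the number of repetitions, and the helper names `skewness` and `sample_means` are all assumptions made for the example. It draws repeated samples from a strongly skewed population and measures how skewed the distribution of sample means is.

```python
import random
import statistics


def skewness(values):
    """Population skewness: the mean of the cubed z-scores."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return sum(((v - mean) / sd) ** 3 for v in values) / len(values)


def sample_means(sample_size, n_samples, rng):
    """Means of repeated samples drawn from a skewed (exponential) population."""
    return [
        statistics.fmean(rng.expovariate(1.0) for _ in range(sample_size))
        for _ in range(n_samples)
    ]


rng = random.Random(42)  # fixed seed so the run is repeatable
skew_small = skewness(sample_means(sample_size=5, n_samples=2000, rng=rng))
skew_large = skewness(sample_means(sample_size=100, n_samples=2000, rng=rng))

print(f"skew of sample means, n = 5:   {skew_small:.2f}")
print(f"skew of sample means, n = 100: {skew_large:.2f}")
```

With the larger sample size the skew of the sample means should sit much closer to zero, which is the sense in which normality of the raw scores matters less as samples grow.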

Slide 7

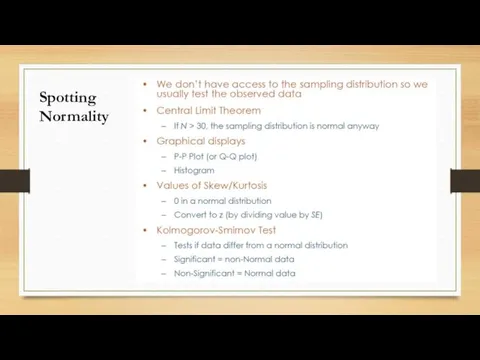

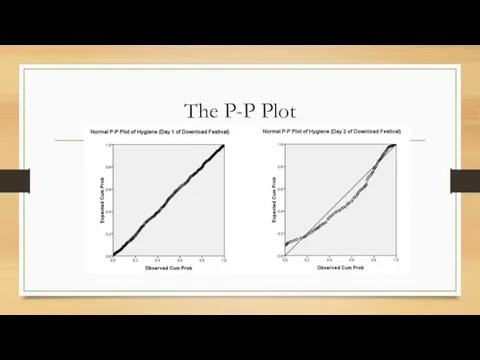

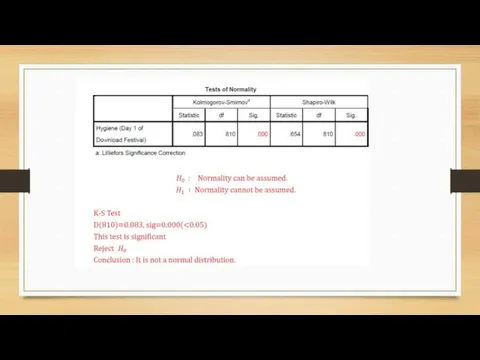

Slide 8

Slide 9

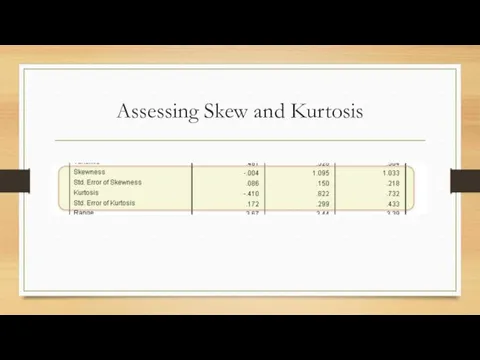

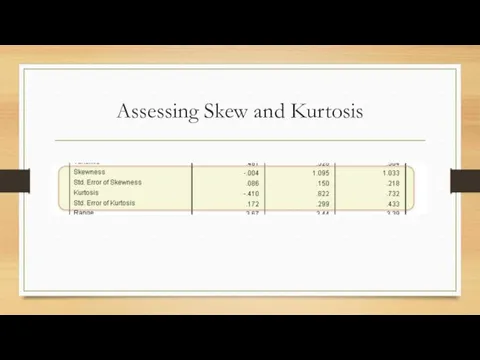

Assessing Skew and Kurtosis

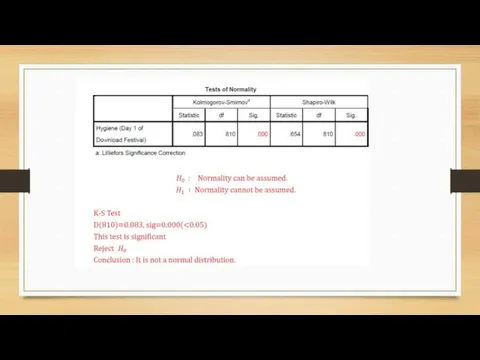
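One common way to assess skew and kurtosis numerically is to divide each statistic by its standard error and read the result as a z-score; an absolute value above about 1.96 is conventionally treated as significant at p < .05. Whether the slide images show exactly this procedure is an assumption. The sketch below uses the small-sample-adjusted skewness and excess kurtosis with their usual standard errors; the function name `skew_kurtosis_z` and the `scores` data are hypothetical.

```python
import math


def skew_kurtosis_z(values):
    """Adjusted skewness and excess kurtosis, each with its z-score (statistic / SE)."""
    n = len(values)
    mean = sum(values) / n
    # Central moments (dividing by n)
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    g1 = m3 / m2 ** 1.5        # moment-based skewness
    g2 = m4 / m2 ** 2 - 3      # moment-based excess kurtosis
    # Small-sample adjustments
    skew = math.sqrt(n * (n - 1)) / (n - 2) * g1
    kurt = (n - 1) / ((n - 2) * (n - 3)) * ((n + 1) * g2 + 6)
    # Standard errors under normality
    se_skew = math.sqrt(6 * n * (n - 1) / ((n - 2) * (n + 1) * (n + 3)))
    se_kurt = 2 * se_skew * math.sqrt((n * n - 1) / ((n - 3) * (n + 5)))
    return {
        "skew": skew,
        "z_skew": skew / se_skew,
        "kurtosis": kurt,
        "z_kurtosis": kurt / se_kurt,
    }


scores = [1, 2, 2, 3, 3, 3, 4, 4, 5, 12]  # hypothetical scores with one high value
result = skew_kurtosis_z(scores)
for name, value in result.items():
    print(f"{name}: {value:.2f}")
```

The function needs at least four scores, because the kurtosis adjustment divides by n - 3.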

Slide 10

Slide 11

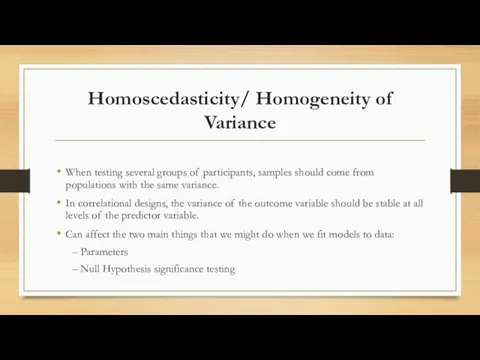

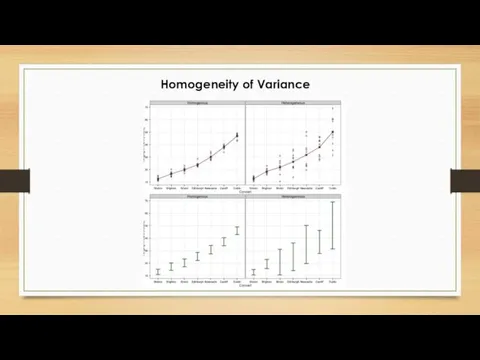

Homoscedasticity/ Homogeneity of Variance

When testing several groups of participants, samples should come from populations with the same variance.

In correlational designs, the variance of the outcome variable should be stable at all levels of the predictor variable.

Heterogeneity of variance can affect the two main things that we might do when we fit models to data:

– Parameters

– Null hypothesis significance testing

Slide 12

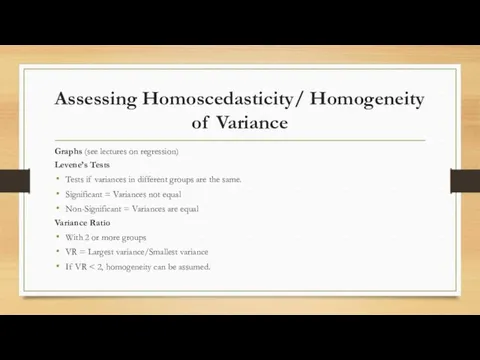

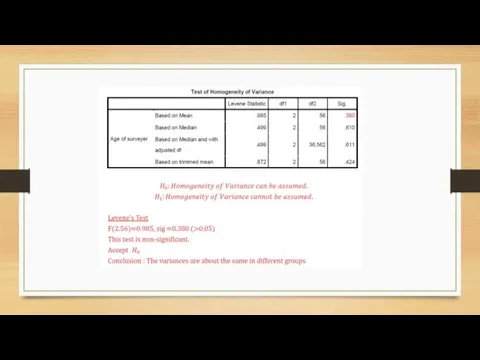

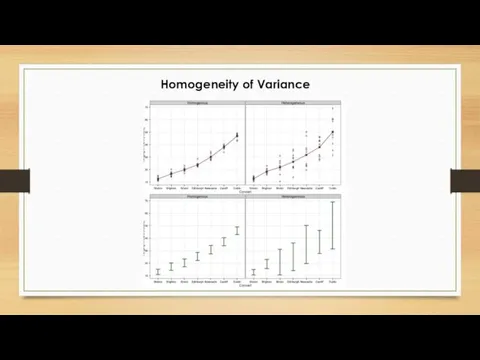

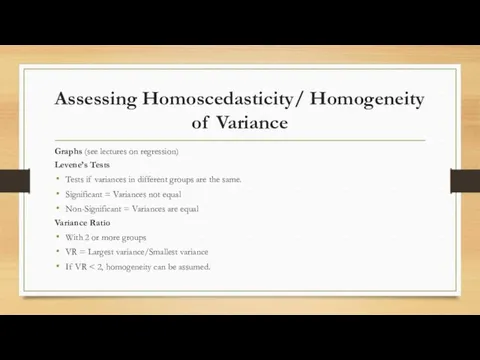

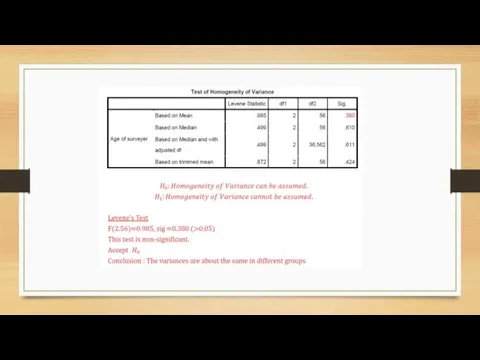

Assessing Homoscedasticity/ Homogeneity of Variance

Graphs (see lectures on regression)

Levene’s Test

Tests if variances in different groups are the same.

Significant = Variances are not equal

Non-Significant = No evidence that the variances differ, so equal variances can be assumed

Variance Ratio

With 2 or more groups

VR = Largest variance/Smallest variance

If VR < 2, homogeneity can be assumed.
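Both checks on this slide can be sketched in a few lines. The code below is a minimal illustration, not a full implementation: it computes the variance ratio and the Levene statistic (a one-way ANOVA F computed on absolute deviations from each group's mean) but does not compute a p-value. The function names and the two groups of scores are hypothetical.

```python
import statistics


def variance_ratio(groups):
    """Largest sample variance divided by smallest sample variance."""
    variances = [statistics.variance(g) for g in groups]
    return max(variances) / min(variances)


def levene_statistic(groups):
    """Levene's W: one-way ANOVA F on absolute deviations from group means."""
    deviations = [[abs(x - statistics.fmean(g)) for x in g] for g in groups]
    k = len(deviations)
    n_total = sum(len(d) for d in deviations)
    grand_mean = statistics.fmean(x for d in deviations for x in d)
    # Between-groups and within-groups sums of squares of the deviations
    between = sum(len(d) * (statistics.fmean(d) - grand_mean) ** 2 for d in deviations)
    within = sum((x - statistics.fmean(d)) ** 2 for d in deviations for x in d)
    return (between / (k - 1)) / (within / (n_total - k))


group_a = [4, 5, 6, 5, 4, 6]  # hypothetical scores, tightly clustered
group_b = [1, 9, 3, 8, 2, 7]  # hypothetical scores, widely spread

vr = variance_ratio([group_a, group_b])
w = levene_statistic([group_a, group_b])
print(f"VR = {vr:.2f}; homogeneity assumed by the VR < 2 rule: {vr < 2}")
print(f"Levene W = {w:.3f} on ({2 - 1}, {12 - 2}) degrees of freedom")
```

Turning W into a significance test requires the F distribution with (k - 1, N - k) degrees of freedom. If SciPy is available, `scipy.stats.levene` called with `center='mean'` reports this kind of statistic together with a p-value.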

Slide 13

Slide 14

Slide 15

Independence

The errors in your model should not be related to each other.

If this assumption is violated: Confidence intervals and significance tests will be invalid.

Slide 16

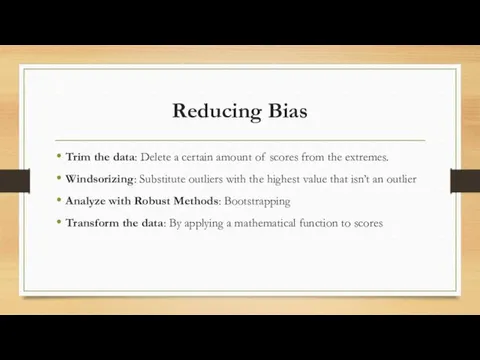

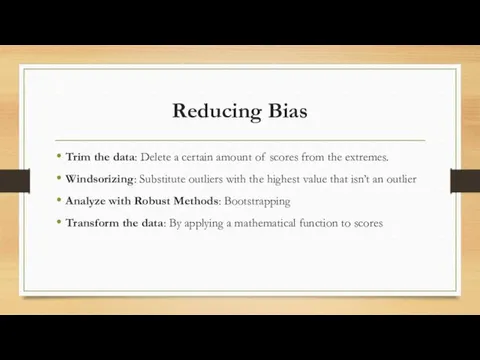

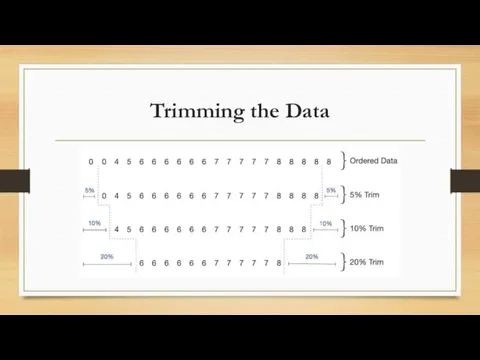

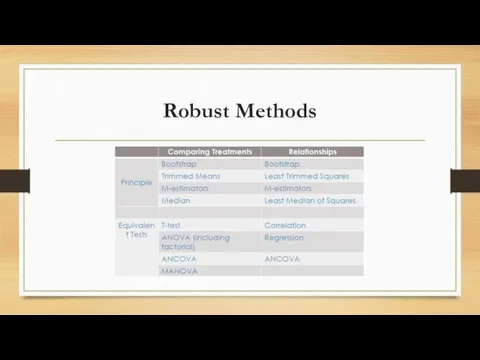

Reducing Bias

Trim the data: Delete a certain number of scores from the extremes.

Winsorizing: Substitute outliers with the highest value that isn’t an outlier
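Trimming and Winsorizing can be sketched as follows. This is a minimal illustration under a simplifying assumption: the `k` most extreme scores at each end are treated as the outliers, whereas the slide does not say how outliers are identified. The function names `trim` and `winsorize` and the `scores` data are hypothetical.

```python
import statistics


def trim(values, k):
    """Drop the k lowest and k highest scores."""
    if 2 * k >= len(values):
        raise ValueError("k is too large for this many scores")
    ordered = sorted(values)
    return ordered[k:len(ordered) - k]


def winsorize(values, k):
    """Replace the k lowest and k highest scores with the nearest retained score."""
    if 2 * k >= len(values):
        raise ValueError("k is too large for this many scores")
    ordered = sorted(values)
    low, high = ordered[k], ordered[-k - 1]
    return [min(max(v, low), high) for v in ordered]


scores = [2, 3, 3, 4, 4, 5, 5, 6, 6, 40]  # hypothetical data with one extreme score

print("raw mean:       ", statistics.fmean(scores))
print("trimmed:        ", trim(scores, 1))
print("trimmed mean:   ", statistics.fmean(trim(scores, 1)))
print("winsorized:     ", winsorize(scores, 1))
print("winsorized mean:", statistics.fmean(winsorize(scores, 1)))
```

Trimming shortens the data set, while Winsorizing keeps the sample size and only pulls the extreme scores in.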

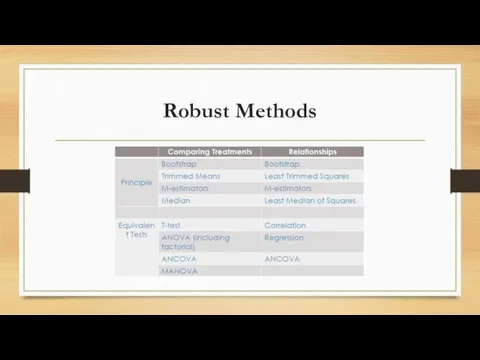

Analyze with Robust Methods: Bootstrapping

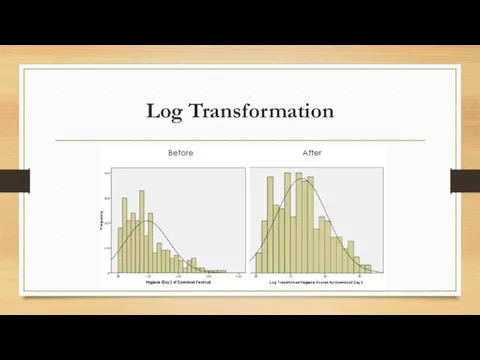

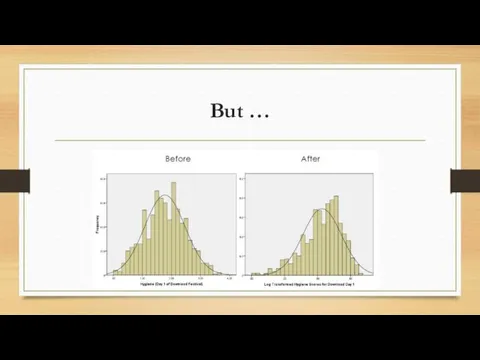

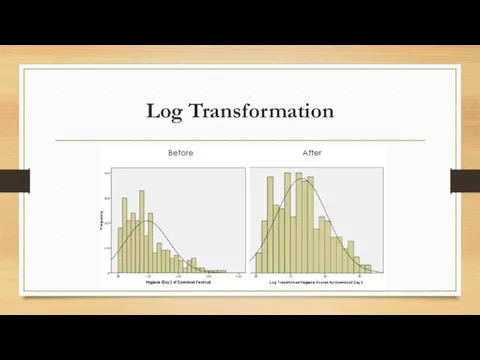

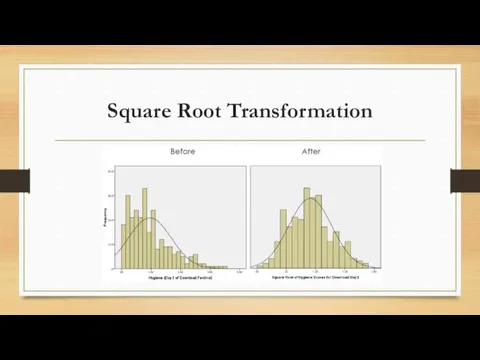
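Bootstrapping, named above as a robust method, can be sketched as a percentile bootstrap for the mean: resample the observed scores with replacement many times, compute the mean of each resample, and take the middle 95% of those means as the confidence interval. The function name `bootstrap_ci`, the number of resamples, the seed, and the data are assumptions made for this example.

```python
import random
import statistics


def bootstrap_ci(values, n_resamples=2000, level=0.95, seed=0):
    """Percentile bootstrap confidence interval for the mean."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    n = len(values)
    # Each resample draws n scores from the data with replacement
    means = sorted(
        statistics.fmean(rng.choices(values, k=n)) for _ in range(n_resamples)
    )
    lower_index = int((1 - level) / 2 * n_resamples)
    upper_index = int((1 + level) / 2 * n_resamples) - 1
    return means[lower_index], means[upper_index]


scores = [2, 3, 3, 4, 4, 5, 5, 6, 6, 40]  # hypothetical data with one extreme score
lower, upper = bootstrap_ci(scores)
print(f"mean = {statistics.fmean(scores):.2f}, 95% bootstrap CI = [{lower:.2f}, {upper:.2f}]")
```

The interval comes from the data's own resampling distribution rather than from an assumed normal sampling distribution, which is why the method is described as robust.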

Transform the data: By applying a mathematical function to scores

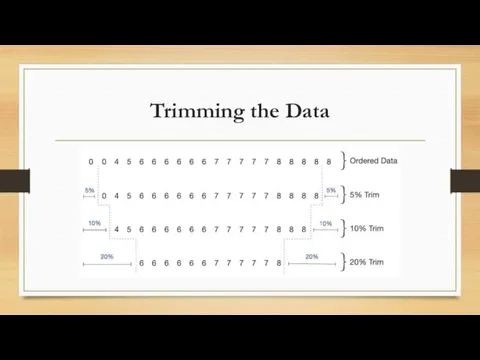

Slide 17

Slide 18

Slide 19

Slide 20

Slide 21

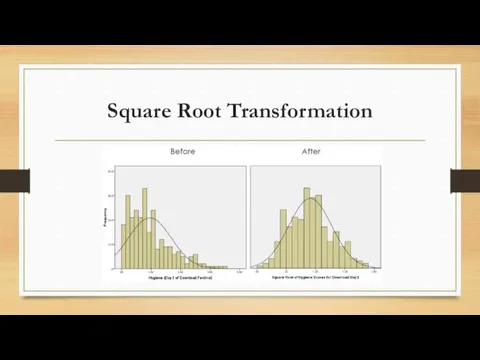

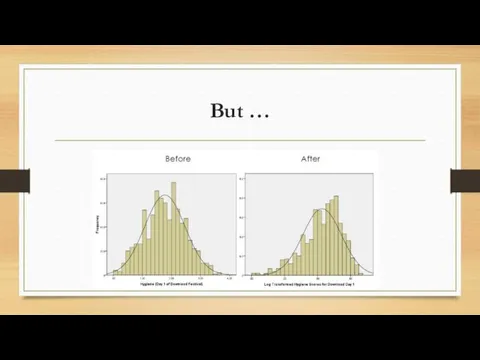

Square Root Transformation

Slide 22

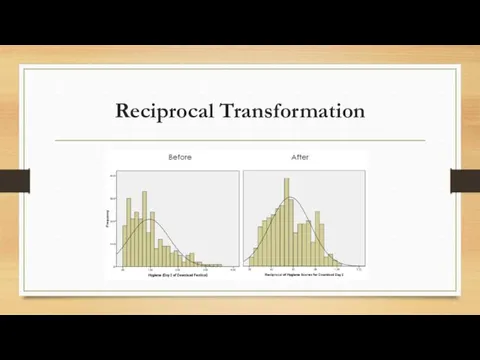

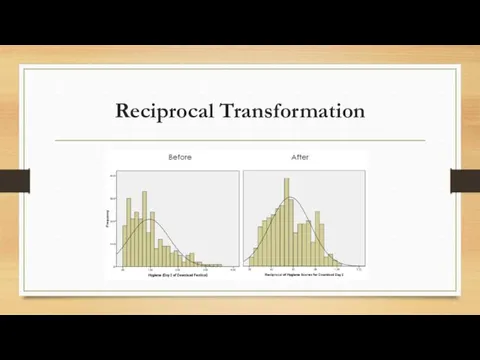

Reciprocal Transformation
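The two transformations named on these slides can be tried on a small set of positively skewed scores. The sketch below is illustrative: the `scores` data and the `skewness` helper are assumptions, and whether a given transformation actually helps depends on the data, so the skewness is printed for each version rather than assumed to improve.

```python
import math
import statistics


def skewness(values):
    """Population skewness: the mean of the cubed z-scores."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return sum(((v - mean) / sd) ** 3 for v in values) / len(values)


scores = [1, 2, 2, 3, 3, 4, 5, 7, 12, 25]  # hypothetical positively skewed scores

# Both transformations require positive scores (the reciprocal is undefined at 0)
sqrt_scores = [math.sqrt(v) for v in scores]
reciprocal_scores = [1 / v for v in scores]

print(f"raw skew:        {skewness(scores):.2f}")
print(f"square-root skew: {skewness(sqrt_scores):.2f}")
print(f"reciprocal skew:  {skewness(reciprocal_scores):.2f}")
```

Note that the reciprocal reverses the ordering of the scores: the largest raw score becomes the smallest transformed score, which has to be kept in mind when interpreting results.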

Slide 23

Определители второго и третьего порядка

Определители второго и третьего порядка Прямоугольные треугольники и их свойства

Прямоугольные треугольники и их свойства Устные приемы умножения и деления чисел от 1 до 1000; 3 класс. Технологический приём Универсальный тренажёр

Устные приемы умножения и деления чисел от 1 до 1000; 3 класс. Технологический приём Универсальный тренажёр Приём вычитания вида 15 -

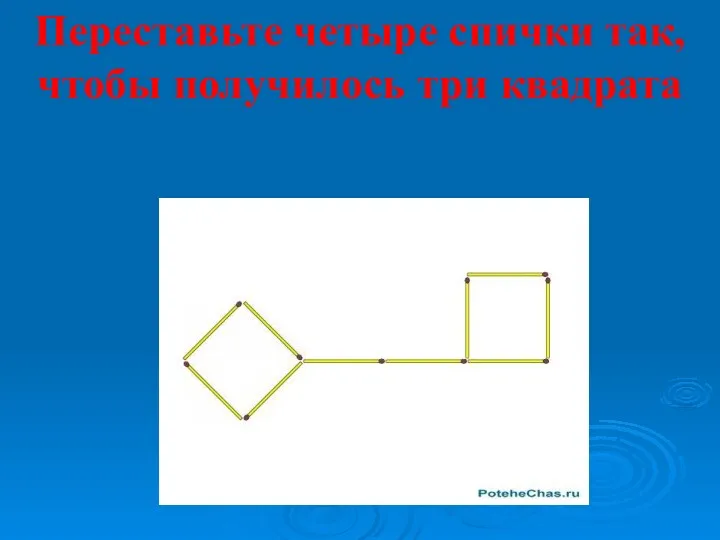

Приём вычитания вида 15 - Игры со спичками

Игры со спичками Математика .Презентация Устный счёт.

Математика .Презентация Устный счёт. Деление с остатком

Деление с остатком Interval Discrete Equation as a Model of Soil and Groundwater Contamination by Nitrogen

Interval Discrete Equation as a Model of Soil and Groundwater Contamination by Nitrogen Понятие о доказательной медицине. Случайное событие. Определение вероятности. Лекция 2

Понятие о доказательной медицине. Случайное событие. Определение вероятности. Лекция 2 Линейное уравнение с двумя переменными, его график, примеры решения уравнений в целых числах

Линейное уравнение с двумя переменными, его график, примеры решения уравнений в целых числах Умножение одночленов

Умножение одночленов Грецькі вчені-математики

Грецькі вчені-математики Диференціальне числення. Похідна функції (лекція 1.2)

Диференціальне числення. Похідна функції (лекція 1.2) Уравнения и неравенства. Упражнение 2

Уравнения и неравенства. Упражнение 2 Личностно ориентированные технологии на уроках математики

Личностно ориентированные технологии на уроках математики Задачи на построение сечений тетраэдра и параллелепипеда

Задачи на построение сечений тетраэдра и параллелепипеда Презентация Прямоугольник, квадрат

Презентация Прямоугольник, квадрат волшебная полянка 1

волшебная полянка 1 Моя страничка на proshkolu.ru

Моя страничка на proshkolu.ru Передбачення результату виконання алгоритму

Передбачення результату виконання алгоритму Linear Regression and Correlation Analysis

Linear Regression and Correlation Analysis Презентация по математике для 1 класса УМК Школа России. По теме: Слагаемые. Сумма.

Презентация по математике для 1 класса УМК Школа России. По теме: Слагаемые. Сумма. Теорема Пифагора

Теорема Пифагора Рациональные уравнения

Рациональные уравнения Умножение дробей

Умножение дробей Движение:Скорость,время,расстояние.

Движение:Скорость,время,расстояние. Статистические характеристики. Основные статистические характеристики. 7 класс

Статистические характеристики. Основные статистические характеристики. 7 класс Четырехугольники. Геометрия 8 класс

Четырехугольники. Геометрия 8 класс